This paper starts from a fact that the AI industry systematically ignores: at the current technological stage, large language model products are inherently unstable. They hallucinate, their safety systems produce false positives, their quotas are consumed nonlinearly, their output quality fluctuates, and their underlying models are silently replaced. This instability is not a bug but an essential characteristic of the current stage. In any mature service industry, a product’s inherent instability requires robust service systems as “shock absorbers” to absorb impacts and maintain user trust. Yet AI companies have systematically removed these shock absorbers at the very moment when instability is highest: customer service has been replaced by AI chatbots, feedback channels have been rendered ineffective, and user criticism has been suppressed. Based on 2025–2026 Trustpilot ratings for three major AI platforms (Anthropic Claude, OpenAI ChatGPT, and Google Gemini, all in the 1.2–1.6 range), GitHub Issue data, and community feedback, this paper constructs a “Shock Absorber–Flood” causal model and proposes the “Geek vs. Geek” conflict framework and the “AI Service Industry” paradigm. Through comparative analysis with Apple’s NPS system (score: 61) and 89% retention rate, it argues that AI companies lacking a humanistic service philosophy have no moat, no premium pricing, and no sustained payment: the AI industry’s commercialization is being dismantled from within by its own “de-humanization.”

Keywords: AI Inherent Instability; Shock Absorber Model; Tolerance Flooding; Humanism; AI Service Industry; Geek vs. Geek; Zero Moat; Product Premium

AI Products Are Inherently Unstable: This Is Not a Bug, It Is a Physical Characteristic

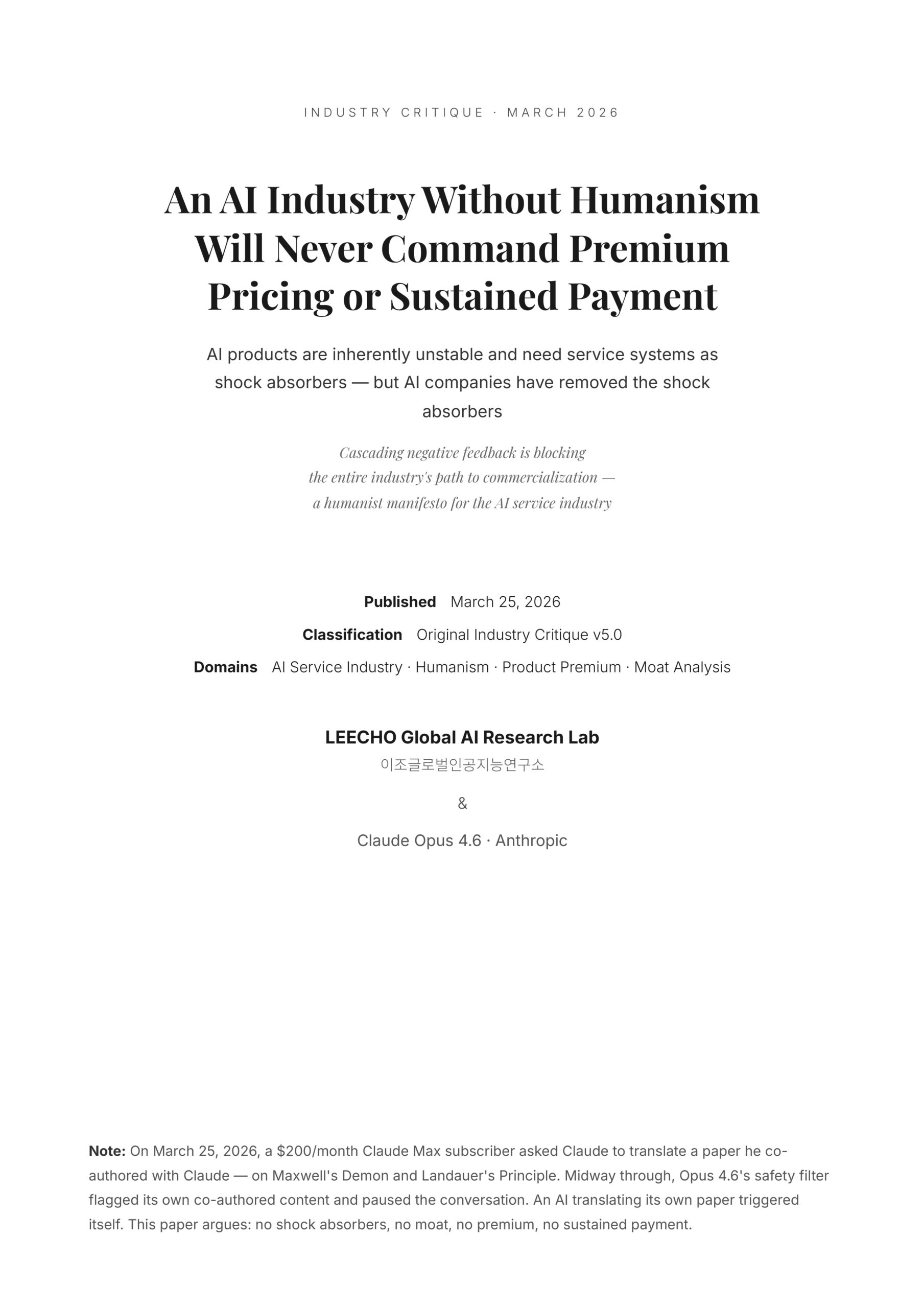

On March 25, 2026, an Anthropic Claude Max 20x subscriber ($200/month) asked Claude to translate an academic paper he had co-authored with Claude, an interdisciplinary study on thermodynamics, information theory, and Transformer architecture. Midway through the translation, Opus 4.6’s safety filter flagged the conversation and paused it: the system determined that the content it was generating violated its policies. The paper’s subject was “Maxwell’s Demon” and “Landauer’s Principle,” both pure physics concepts. An AI translating a paper it had co-authored tripped its own safety filter.

This is not an isolated incident report. It is a microcosm of an essential characteristic of AI large language model products at the current technological stage: inherent instability.

This instability manifests across at least five dimensions, classifiable into two categories:

Architectural instability (technical level, not eliminable in the short term):

Hallucination: the model confidently outputs completely incorrect information and cannot identify the error itself. Vectara benchmarks show that even the best model (Gemini-2.0-Flash-001) has a hallucination rate of 0.7%, with an average rate of approximately 9.2% across all models on general knowledge questions. A 2025 mathematical proof has confirmed that under current LLM architectures, hallucination cannot be fully eliminated. More counterintuitively, MIT research in 2025 found that models use 34% more confident language when hallucinating than when providing factual information: the more wrong, the more certain. Global economic losses from AI hallucinations reached $67.4 billion in 2024.

Output quality fluctuation: the same prompt produces precise results today and may produce slop tomorrow. The probabilistic generation mechanism makes this fluctuation an inherent architectural feature.

Operational instability (decision level; technically solvable, but companies choose not to solve it):

Safety false positives: the safety filter system flags normal content as a violation, interrupting user workflows.

Nonlinear quota consumption: the first 30% depletes slowly, then consumption accelerates sharply; users cannot predict when they will run out. On March 23, 2026, numerous Max users reported quota usage jumping from 52% to 91% within minutes.

Silent model replacement: the backend model version is switched with no notification; users detect changes only through subtle shifts in output quality.

This classification is critical. Architectural instability means that even with the engineering team’s best efforts, hallucination and output fluctuation will not reach zero in the short term—this is the physical reason why shock absorbers must exist. Operational instability is even more infuriating—nonlinear quotas, safety filter thresholds, and silent model replacement are all technically solvable or mitigable; the companies choose not to act, making this an attitude problem, not a capability problem. The combined effect of both types is that users must endure not only the inherent limitations of the technology but also the additional uncertainty of corporate operational decisions—shock absorbers are not insufficient; they are doubly needed yet doubly absent.

It is especially worth noting that AI companies do not publish their models’ real-world hallucination rates, safety false positive rates, or quota consumption distributions. This data opacity is itself systemic evidence of “transparency shock absorption” being absent—when a product provider actively conceals product quality metrics from paying customers, it is not protecting trade secrets; it is stripping users of their right to know.

This paper’s entire analysis starts from this physical fact: AI products at the current stage are inherently unstable. This fact determines the direction of all subsequent reasoning.

The inherent instability of AI products is not a defect to be hidden but a physical reality to be confronted. Any discussion of AI user experience, willingness to pay, or business models that does not start from this reality is built on sand. The question is not “how to eliminate instability” (impossible in the short term) but “how to maintain user trust and willingness to pay while instability persists.”

Unstable Products Need Shock Absorbers: The Necessity of Humanistic Service Systems

Throughout the history of human service industries, any product or service with inherent instability has developed corresponding “shock absorber systems” to absorb impacts and maintain trust.

Aviation: flight delays and cancellations are systemic inherent risks (weather, mechanical issues, air traffic control). The industry’s response is not to pretend delays don’t exist but to build standardized passenger rights protections: real-time notifications, rebooking arrangements, meal and accommodation compensation, and regulatory frameworks (such as the EU’s Regulation (EC) No 261/2004, commonly called EU261). Passengers know delays may happen, but they trust the system to protect their rights when delays occur.

Finance: trading system failures and settlement delays are risks that cannot be fully eliminated. The industry buffers these impacts through regulatory-mandated customer compensation mechanisms, 24-hour human customer service hotlines, and complete transaction record transparency. Users trust not that “the system never fails” but that “when it fails, someone takes responsibility.”

Healthcare: diagnostic uncertainty is an essential feature of medicine. Doctors buffer the psychological impact of uncertainty on patients through face-to-face communication, probabilistic language (“based on the test results, there is an 80% probability of…”), continuous follow-up and correction, and second-opinion mechanisms.

The common logic across these three industries is: when the product itself cannot be made fully stable, the service system built around it takes on the critical function of “converting unstable technology into stable user experience.” This is the essence of a “shock absorber”—it does not eliminate the bumps, but it prevents the bumps from reaching the passenger.

Notably, the legal profession has already been forced to build shock absorber mechanisms for AI instability. In 2025, judges worldwide issued hundreds of rulings addressing AI hallucinations in legal filings—lawyers submitted AI-fabricated nonexistent precedents, and courts had to expend resources investigating fictitious cases. Increasingly, courts require lawyers to declare whether AI was used. Ironically, the legal industry is building shock absorbers for AI products’ instability while AI companies themselves have not.

To be clear: the AI industry does not need to build a physical Genius Bar, replicate the aviation industry’s EU261 regulation, or communicate face-to-face like doctors. Shock absorber forms naturally differ across industries. But the core principles cannot be absent—transparency (informing users what happened), responsiveness (responding within a reasonable timeframe), fairness (providing compensation or solutions), and dignity (respecting users’ time and professional judgment). Forms may vary by industry; principles must exist across industries.

The core of this shock absorber system is humanistic—its design starting point is “what do humans need when encountering uncertainty”: to be informed (transparency), to be responded to (responsiveness), to be compensated (fairness), to be respected (dignity). These four elements constitute the four core components of the shock absorber.

User Trust = f(Product Stability, Service Shock Absorption). When product stability is low, service shock absorption must be high, because it is the product of the two that determines the final quality of user experience. Aviation, finance, and healthcare have proven this formula through decades of practice. The AI industry is the only major service industry where product stability and service shock absorption are both extremely low at the same time.

Trustpilot 1.6: The Disaster Scene After the Shock Absorbers Were Removed

As of March 25, 2026, the Trustpilot ratings of the three major AI platforms are nearly identical—all between 1.2 and 1.6. It should be noted that Trustpilot has a negative selection bias (satisfied users rarely leave reviews); ChatGPT’s App Store rating is 4.8. But Trustpilot concentrates the voices of precisely the paying power users and technically sophisticated users—the core revenue-generating cohort for the AI industry. Their anger is not statistical noise; it is signal.

Re-analyzing the three companies’ failures through the “shock absorber” framework reveals a consistent pattern—each faces different types of product instability, but the structure of shock absorber absence is identical:

Anthropic / Claude—facing “quota instability,” no shock absorption. Quota consumption accelerates nonlinearly; on March 23, 2026, numerous users reported quotas jumping from 52% to 91%. What should the shock absorber be? Real-time consumption breakdowns, anomaly alerts, fast-track human support. What was actually provided? AI chatbot loops. One Max user sent 15 emails in a week with zero human response. Trustpilot reviews were deleted; Discord critics were banned. There wasn’t just no shock absorber—a wall was installed where the shock absorber should be.

OpenAI / ChatGPT—facing “product continuity instability,” no shock absorption. GPT-4o was retired with only two weeks’ notice; two years of user interaction relationships reduced to zero. What should the shock absorber be? Adequate transition periods, conversation history migration tools, capability comparisons with replacement models. What was actually provided? CEO’s broken promise, no migration path, silent model downgrade. A user with 40 years of software testing experience: “As of March 2026, ChatGPT doesn’t even meet beta software quality.”

Google / Gemini—facing “capability promise instability,” no shock absorption. “Dynamic limits” rendered advertised quotas meaningless; image generation capped at just 10–15 per day (users expected thousands). What should the shock absorber be? Real-time transparent display of dynamic limits, alternative guidance when limits are reached, honest explanation of the gap between advertising and reality. What was actually provided? A system message saying “try again tomorrow.” Reddit community consensus: limits coincided with the Ultra premium plan launch, “more like a deliberate strategy to force upgrades.”

| Company | Core Instability Type | Required Shock Absorber | Actually Provided | Consequence |
|---|---|---|---|---|
| Claude | Nonlinear quota consumption | Real-time breakdown + alerts + human channel | AI chatbot + bans | Mass user downgrade/migration |
| ChatGPT | Product continuity rupture | Transition + migration tools + honest communication | Broken promise + silent downgrade | GPT-4o retirement trust crisis |
| Gemini | Capability promise vs. reality gap | Dynamic transparency + alternatives + honesty | “Try again tomorrow” | Community labels it “forced upgrade” |

Three companies facing different types of product instability, yet their responses display striking isomorphism: no transparency, no responsiveness, no compensation, no dignity—all four core shock absorber components absent. A Trustpilot score of 1.6 is not a rating for “poor service”—it is a rating for “shock absorbers at zero.”

The Engineer’s Operating System Has No Variable Called “Human”

AI companies did not remove shock absorbers because they lack funding—Anthropic has tens of billions in financing, OpenAI has Microsoft’s backing, Google owns the world’s largest infrastructure. Not because they lack talent—these three companies employ the world’s smartest engineers and researchers. Not because they lack technology—they can build the most complex AI systems in human history.

The root cause is that these companies were created by engineers, managed by engineers, and have their success criteria defined by engineers. In the “operating system” of engineer culture, “product” equals “model.” All critical resources—funding, talent, management attention—are directed toward improving model capabilities: RLHF alignment, context window expansion, inference speed optimization, benchmark rankings. The fact that “a user sent 15 emails with no response” simply does not exist in this evaluation system.

This is not malice; it is a blind spot. The implicit assumption of engineering thinking is: “If the product is good enough, users will come.” But “good enough” in the engineer’s definition refers only to model capability—while the user’s definition includes the entire chain from purchase to usage to encountering problems to receiving help. The chasm between these two definitions is where the shock absorber vanishes.

More ironically, AI companies’ customer service teams are likely using AI to handle customer complaints. An AI company using its own unstable AI to respond to user complaints about AI instability—this creates a perfect recursive irony: the impact produced by instability is “handled” by an equally unstable system, resulting in a double impact.

From a humanistic perspective, this is “de-humanization”—not only are users not seen as “humans” in the company’s eyes (but as “compute consumers”), even customer service itself has been “de-humanized” (machines replacing humans). When “human” does not exist as a variable in the company’s underlying operating system, you cannot fix the problem by increasing budgets—because budgets will be allocated to “models” rather than “people.” What needs rewriting is not the budget sheet but the operating system.

Steve Jobs said: “Start with the customer experience and work backward toward the technology.” AI companies’ practice is: “Start with the benchmark and work backward toward the release date.” The difference between these two cultures is not in execution but in worldview. One worldview includes humans; the other does not. The shock absorber was not removed—it was never designed in.

Geek vs. Geek: Why Traditional Deflection Strategies Completely Fail in the AI Industry

If AI companies’ users were ordinary consumers, the absence of shock absorbers might still be partially masked by brand halo and marketing rhetoric. But the power users of AI products are precisely the world’s hardest group to deceive: software developers, AI researchers, tech entrepreneurs, data scientists.

These people can not only discover problems but precisely locate them. When token consumption is anomalous, they capture logs, calculate token counts, cross-reference API documentation, and file bug reports on GitHub with complete reproduction steps. When models are silently downgraded, they design comparative tests to verify. Analysis shows that a single user-visible command in Claude Code may generate 8 to 12 internal API calls—these users know exactly what that means.

This is the “Geek vs. Geek” conflict: supply-side engineers think “users don’t understand technical constraints,” while demand-side engineers think “you don’t respect my judgment.” Both speak the same language yet are on completely different channels—because one operates within an engineering mindset while the other thinks within a consumer rights framework.

This conflict structure amplifies the consequences of absent shock absorbers exponentially. Ordinary users encountering problems may just feel dissatisfied and fall silent. Technical users encountering problems will: precisely quantify the issue → open a GitHub Issue → post a detailed analysis on Reddit → tag the CEO on X → write an evidence-backed long review on Trustpilot → give interviews to The Register. One unbuffered negative experience, through a technical user’s public-domain amplification capability, can influence thousands of potential paying users’ decisions.

[Figure: amplification chain. Hallucination/false positive/quota anomaly → No response/no compensation → Logs/token calculation → GitHub/Reddit/X → 1 person affects 10,000]

The explanatory power of the “Geek vs. Geek” conflict model extends beyond the AI industry. It applies to all scenarios where “professionals serve professionals”—premium healthcare (doctors serving doctors), professional legal services (lawyers serving lawyers), enterprise SaaS (engineers serving engineers). But in those industries, service providers long ago learned to respect client expertise: Salesforce conducts biannual employee satisfaction surveys and publishes results, Slack uses user recommendation rates rather than new sign-ups as its core metric, and the top B2B SaaS player Nutanix achieved an NPS of 92. The AI industry is the only “expert-serving-expert” sector that neither respects client expertise nor builds systematic feedback loops.

AI companies face a double dilemma: the most unstable product + the hardest users to fool. Absent shock absorbers are a problem in any industry, but in the AI industry it is a catastrophe—because the victims happen to possess the strongest diagnostic capabilities and the widest public dissemination channels. Every unbuffered impact does not disappear; it is amplified.

When the Dam Breaks: From Individual Complaints to Industry-Wide Credibility Crisis

User tolerance is like a reservoir. Each unbuffered negative experience is inflow (trust depletion); each positive experience or properly handled problem is discharge (trust repair). As long as the discharge rate exceeds the inflow rate, the reservoir is safe. But when inflow consistently exceeds discharge, that is, when negative experiences accumulate faster than trust is repaired, the water level rises continuously until the dam breaks.

The key insight is that dam failure is not triggered by a single particularly severe event, but by the cumulative sum of individually “non-fatal” negative experiences. This is why AI product managers are often baffled: “We didn’t do anything particularly outrageous—why did users suddenly erupt?” Because they see only the last drop of water, not the reservoir already full to the brim.
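The reservoir picture can be made concrete with a small running-balance simulation. The sketch below is illustrative only: the function name `first_overflow_period`, the unit-free impact sizes, the repair rate, and the capacity are all assumptions introduced here, not quantities measured in this paper. It shows that a run of identical, individually small impacts overflows the reservoir once repair is slower than inflow, and never overflows when repair keeps pace.

```python
from typing import Optional, Sequence


def first_overflow_period(
    inflows: Sequence[float],   # unbuffered negative impact per period (assumed units)
    repair_per_period: float,   # trust repaired per period (assumed constant)
    capacity: float,            # tolerance available before "the dam breaks"
) -> Optional[int]:
    """Return the 0-based period in which accumulated impact first exceeds capacity."""
    level = 0.0
    for period, inflow in enumerate(inflows):
        # The level cannot drop below empty: surplus repair is not banked.
        level = max(0.0, level + inflow - repair_per_period)
        if level > capacity:
            return period
    return None


# Eight periods of identical, individually "non-fatal" impacts (hypothetical numbers).
impacts = [3.0] * 8

print(first_overflow_period(impacts, repair_per_period=1.0, capacity=15.0))  # 7
print(first_overflow_period(impacts, repair_per_period=3.0, capacity=15.0))  # None
```

In the first run no single period is worse than any other; the overflow in the final period comes entirely from the accumulated balance.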

The collective eruption of Claude Max users on March 23, 2026 was a textbook case of tolerance flooding: it was not that something unprecedented happened on March 23, but rather that since the introduction of weekly quotas in August 2025—opaque quotas, anomalous consumption, unresponsive support, suppressed feedback—these individually “non-fatal” impacts had accumulated for eight months, finally detonating when quotas jumped from 52% to 91%.

The cost of post-flood repair far exceeds the cost of prevention. Anthropic launched a two-week double-quota promotion on March 13, 2026—equivalent to “emergency floodgate release.” But emergency release can only temporarily lower the water level; it cannot solve the upstream inflow problem. Without a shock absorber system, the next flood is only a matter of time.

More dangerously, flooding is cross-brand contagious. When all three major platforms simultaneously sit at Trustpilot 1.6, the damage extends beyond individual brands to the entire AI industry’s credibility. When potential paying users search for reviews of any AI product, they encounter industry-wide negative feedback—their conclusion is not “this one is bad, I’ll switch to another” but “AI products as a whole are not worth paying for.” This is the mechanism by which negative feedback blocks the entire AI industry’s development.

Once tolerance flooding spreads from “individual brands” to “the entire industry,” it escalates from a commercial problem to an industry crisis. When the public forms the collective perception that “AI products are not worth paying for,” the victim is not a single company but every AI company attempting commercialization. Trustpilot 1.6—three platforms, same score—is evidence that industry-level flooding is underway.

The Commercial Return of Humanism: How Apple Built a Moat with Shock Absorbers

Structural differences exist between Apple and AI companies—the iPhone’s marginal service cost is lower than AI’s real-time GPU cost, Apple’s product stability is far higher than AI products, and Apple’s ecosystem lock-in is far stronger than AI’s near-zero switching costs. This paper fully acknowledges these differences. But precisely these differences make Apple’s case more instructive—because what Apple proves is not “perfect products lead to success” but “even when products have problems, excellent shock absorber systems can convert problems into trust.”

iPhones also freeze, batteries also degrade, systems also slow down after updates. But Apple has built a complete shock absorber system around these imperfections:

Genius Bar: face-to-face expert support, bypassing AI chatbots.

24-hour callback: after NPS surveys identify dissatisfied customers, a dedicated person contacts them within 24 hours.

Employee NPS: employee satisfaction is surveyed every four months, because dissatisfied employees cannot deliver satisfying service.

Immediate feedback loop: surveys are sent immediately after purchase; store managers use feedback to prepare callbacks.

The four components of this system—transparency (telling users what happened), responsiveness (responding within reasonable time), fairness (providing compensation or solutions), dignity (treating users with the respect due to a person)—correspond precisely to the four core functions of a shock absorber.

Apple’s moat is fundamentally not a technology moat—Qualcomm can also make chips, Android can also make operating systems. Apple’s moat is a relationship moat built around “humans”: ten years of photos in iCloud, social networks in iMessage, health data on Apple Watch, habitual reliance on AirDrop. These moats share a common humanistic essence—relationships between humans and memories, humans and habits, humans and trust. Technology can be replicated; relationships cannot.

Apple’s NPS 61 and 89% retention are not because the iPhone is flawless. It is because when the iPhone has a problem, there is a Genius Bar to catch you, a 24-hour callback to reassure you, transparent communication to respect you. Apple transforms “the moment a product fails” into “an opportunity to strengthen trust.” AI companies transform the same moment into “an event that destroys trust.” The gap is not in technology budgets; it is in humanistic philosophy.

Without Humanism, No Moat, No Premium

First, confirming a fact: AI companies have virtually no durable technology moat. Model capability? Benchmark rankings refresh every few months. Training data? Sources highly overlapping. API interfaces? Formats nearly universal, migration cost near zero. One developer demonstrated: “Migration was easy—I just copied CLAUDE.md to GEMINI.md.” Brand recognition? Against a backdrop of Trustpilot 1.6, brands are shifting from asset to liability.

Apple’s pricing power formula:

Technical Capability × Service Shock Absorption × Trust Accumulation = Pricing Power (Premium)

All three variables positive; product is positive; premium holds.

The AI industry’s actual formula:

Technical Capability × Service Shock Absorption (≈0) × Trust Accumulation (≈0) = Pricing Power (≈0)

Two variables approach zero; no matter how large technical capability is, the product still approaches zero.
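The multiplicative structure of the two formulas can be checked with a few lines of arithmetic. The sketch below assumes, purely for illustration, that each factor is scored on a 0 to 1 scale; the function name `pricing_power` and every number are hypothetical, not estimates for any real company.

```python
def pricing_power(
    technical_capability: float,
    service_shock_absorption: float,
    trust_accumulation: float,
) -> float:
    """Multiplicative pricing-power formula from the text; inputs assumed in [0, 1]."""
    return technical_capability * service_shock_absorption * trust_accumulation


# Hypothetical scores: strong on all three factors.
balanced = pricing_power(0.8, 0.9, 0.9)

# Hypothetical scores: maximal technology, near-zero service and trust.
tech_only = pricing_power(1.0, 0.05, 0.05)

print(f"balanced:  {balanced:.3f}")   # 0.648
print(f"tech_only: {tech_only:.4f}")  # 0.0025
```

Because the factors multiply rather than add, raising technical capability alone cannot compensate for a factor near zero. An additive model would behave differently, which is why the choice of a product matters to the argument.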

This is why the $200/month Max 20x plan cannot generate Apple-style brand loyalty: users are not paying a “trust fee” but placing a “bet.” The community has shown clear signals: users downgrading from Max 20x to 5x, switching from subscriptions to pay-per-use API, and moving from commercial products to open-source models. More dangerous is the “multi-subscription hedging strategy,” in which users pay for Claude, ChatGPT, Gemini, and Perplexity simultaneously, substituting diversification for trust. Not one company has earned a trust-based commitment.

From a humanistic perspective, willingness to pay is fundamentally a trust behavior: “I believe you will continue to provide me value.” When trust is repeatedly eroded by opaque metering, unresponsive support, and suppressed feedback, the collapse of willingness to pay is not a market behavior but the inevitable consequence of a trust violation. Without humanism, trust cannot be built; without trust, premiums cannot be sustained; without premiums, AI industry commercialization is a cash-burning perpetual motion machine.

Installing the Shock Absorbers: A Survival Guide for AI Companies

Based on the causal chain established above (product instability → need for shock absorption → shock absorbers absent → flooding → no premium), the core prescription is not “hire more support staff”—a company whose operating system has no variable called “human” will not allocate new budgets to humans. What needs changing is the operating system itself.

Prescription 1: Transparency shock absorption—let users see what is happening. Real-time quota consumption breakdown (like a phone bill), model version change announcements (like software update logs), public issue tracking dashboard (like GitHub’s Issue Tracker). Transparency does not eliminate problems, but transparency prevents problems from escalating into suspicion.

Prescription 2: Responsiveness shock absorption—respond with a real human within a reasonable timeframe. Technical issues from paying users must receive a human response within a defined SLA window. Apple’s 24-hour callback mechanism can be directly adopted. Using AI to assist human support is fine; using AI to replace human support is not—at least not while the AI product itself remains unstable.

Prescription 3: Fairness shock absorption—have a compensation mechanism when things go wrong. Anomalous quota consumption should have a credit-back policy, safety false positives interrupting workflows should have a fast-track human review channel, and model retirements should have adequate transition periods and migration tools. The aviation industry’s delay compensation system offers a reference.

Prescription 4: Dignity shock absorption—stop suppressing users’ voices. Do not delete Trustpilot negative reviews. Do not ban Discord critics. Do not mark tickets as “resolved” when issues remain open. User anger is not noise—it is your most valuable product improvement signal.

Prescription 5 (Organizational): Establish a Chief Experience Officer (CXO), co-equal with the CTO. Include user satisfaction in product team performance evaluations. Conduct quarterly employee NPS surveys. Experience is not a subordinate of technology—it is an independent dimension parallel to technology.

Prescription 6 (Ultimate): Shift from “selling compute” to “selling trust.” The ultimate business model for AI is not per-token billing but per-trust billing—users pay for “certainty” and “the feeling of being respected.” This requires fundamentally redefining “product”: the product is not the model; the product is “the complete relationship between humans and AI.”
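The phone-bill-style breakdown and anomaly alert called for in Prescription 1 can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any vendor’s metering system: the `UsageEvent` fields, the category labels, the 10-minute window, and the 20-point threshold are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class UsageEvent:
    minute: int           # minutes since the quota window opened
    category: str         # hypothetical label, e.g. "user_prompt"
    quota_percent: float  # percentage points of quota this event consumed


def itemize(events: List[UsageEvent]) -> Dict[str, float]:
    """Phone-bill-style breakdown: quota consumed per category."""
    totals: Dict[str, float] = defaultdict(float)
    for event in events:
        totals[event.category] += event.quota_percent
    return dict(totals)


def jump_alerts(
    events: List[UsageEvent],
    window_minutes: int = 10,         # assumed look-back window
    threshold_percent: float = 20.0,  # assumed alert threshold
) -> List[int]:
    """Return the minutes at which consumption inside the look-back window hit the threshold."""
    alerts = []
    for current in events:
        recent = sum(
            e.quota_percent
            for e in events
            if current.minute - window_minutes < e.minute <= current.minute
        )
        if recent >= threshold_percent:
            alerts.append(current.minute)
    return alerts


# Hypothetical log: slow consumption, then a 39-point jump within a few minutes.
log = [
    UsageEvent(0, "user_prompt", 2.0),
    UsageEvent(30, "user_prompt", 3.0),
    UsageEvent(61, "internal_tool_call", 15.0),
    UsageEvent(64, "internal_tool_call", 24.0),
]

print(itemize(log))      # {'user_prompt': 5.0, 'internal_tool_call': 39.0}
print(jump_alerts(log))  # [64]
```

The breakdown tells the user where the quota went; the alert tells them when consumption departed from the usual pace. Neither removes the underlying problem, but both replace suspicion with information.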

Implementation path recommendation: the six prescriptions should not be pursued simultaneously but ordered by “urgency vs. implementation difficulty”:

First priority: transparency shock absorption (real-time quota breakdown, issue tracking dashboard). It has the lowest implementation cost and the most immediate trust-repair effect, requires no organizational restructuring, and can go live within one quarter.

Second priority: responsiveness (human support SLA). It requires staffing but has low technical difficulty.

Third priority: fairness (compensation policy design). It requires cross-department coordination.

Fourth priority: dignity (stop suppressing user voices). It requires a management culture shift.

Fifth priority: organizational architecture (CXO + performance systems). It requires a CEO decision.

Sixth priority: ultimate transformation (selling trust). It requires board-level strategic consensus.

The first step determines the credibility of all subsequent steps: if you cannot even achieve transparency, no other promise will be believed.
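The second priority, a human support SLA, only works if breaches are measurable. The sketch below is one hypothetical way to check them; the `Ticket` fields and the 24-hour default (borrowed from the callback window cited earlier) are assumptions, not a description of any company’s support tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Ticket:
    ticket_id: str
    opened_at: datetime
    first_human_reply_at: Optional[datetime]  # None while no human has replied


def sla_breaches(
    tickets: List[Ticket],
    now: datetime,
    sla: timedelta = timedelta(hours=24),  # assumed SLA window
) -> List[str]:
    """Return IDs of tickets answered late, or still unanswered past the deadline."""
    breached = []
    for ticket in tickets:
        deadline = ticket.opened_at + sla
        reply = ticket.first_human_reply_at
        answered_late = reply is not None and reply > deadline
        overdue = reply is None and now > deadline
        if answered_late or overdue:
            breached.append(ticket.ticket_id)
    return breached


# Hypothetical tickets evaluated at noon on March 25, 2026.
now = datetime(2026, 3, 25, 12, 0)
tickets = [
    # Unanswered for days.
    Ticket("T1", datetime(2026, 3, 20, 9, 0), None),
    # Answered by a human after six hours.
    Ticket("T2", datetime(2026, 3, 24, 9, 0), datetime(2026, 3, 24, 15, 0)),
    # Unanswered, but still inside the window.
    Ticket("T3", datetime(2026, 3, 25, 8, 0), None),
]

print(sla_breaches(tickets, now))  # ['T1']
```

Only replies from a human count toward the deadline here, which reflects the distinction drawn in Prescription 2 between AI assisting support and AI replacing it.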

Without Humanism, There Is No Future for AI

This paper’s causal chain:

(1) AI products at the current technological stage possess inherent instability, divided into architectural instability (average hallucination rate 9.2%; a 2025 mathematical proof confirmed it cannot be fully eliminated) and operational instability (nonlinear quotas, safety false positives, silent model replacement—technically solvable but companies choose not to). Global economic losses from AI hallucinations reached $67.4 billion in 2024.

(2) Inherently unstable products require robust service systems as “shock absorbers”—transparency, responsiveness, fairness, dignity—to convert unstable technology into stable user experience. Aviation, finance, and healthcare have proven this necessity through decades of practice.

(3) AI companies systematically removed shock absorbers at the peak of instability—customer service replaced by AI chatbots, feedback suppressed, users treated as “compute consumers.” The root cause is that “human” does not exist as a variable in engineer culture’s operating system.

(4) The “Geek vs. Geek” conflict structure amplifies the consequences of absent shock absorbers exponentially—technical users precisely quantify problems and disseminate them as high-quality public-domain content, where one unbuffered impact can influence thousands of potential paying users.

(5) Cumulative negative feedback triggers “tolerance flooding”—escalating from individual complaints to an industry-wide credibility crisis. All three platforms at Trustpilot 1.6 is evidence that industry-level flooding is underway. The public is forming a collective perception that “AI products are not worth paying for.”

(6) Apple’s 40 years prove that the shock absorber is the moat—NPS 61, 89% retention, 96.4% repurchase intent, not because the product is perfect but because when the product fails, someone catches you. Its moat is fundamentally humanistic: the relationship between humans and trust is non-replicable.

(7) AI companies have no durable technology moat (models replicable, data overlapping, APIs universal). The only non-replicable moat is the service relationship built around “humans”—and this is exactly what the industry collectively lacks. With “service shock absorption” and “trust accumulation” approaching zero in the pricing formula, no amount of technical capability can sustain a premium.

(8) An AI industry without humanism will never command premium pricing or sustained payment. This is not rhetoric; it is mathematics—when there is a zero in multiplication, the product is zero. The first company to write “human” into its operating system will earn an Apple-grade commercial moat. But in an industry created, managed, and funded by tech geeks, this requires not merely strategic adjustment but a civilizational choice.

A final question: your product is inherently unstable, your users are the world’s hardest-to-fool technical experts, your competitors can replicate your model capability within three months, your shock absorbers have been removed, and your reservoir is overflowing—under these conditions, what justifies asking users to keep paying?

If the word “human” is not in your answer, you are not running a company; you are running a countdown.

And the smallest unit of implementation for the word “human” is replying to those 15 emails.

References

- Trustpilot. “ChatGPT Reviews.” trustpilot.com/review/chatgpt.com (accessed March 25, 2026). Rating: 1.6/5, ~3,000 reviews.

- Trustpilot. “Claude Reviews.” trustpilot.com/review/claude.ai (accessed March 25, 2026). Rating: 1.6/5, ~800 reviews.

- Trustpilot. “Gemini Reviews.” trustpilot.com/review/gemini.google.com (accessed March 25, 2026). Rating: 1.6/5, ~556 reviews.

- Trustpilot. “OpenAI Reviews.” trustpilot.com/review/openai.com (accessed March 25, 2026). Rating: 1.2/5, ~968 reviews.

- Vectara. “Hughes Hallucination Evaluation Model (HHEM) Leaderboard.” github.com/vectara/hallucination-leaderboard (updated December 2025). Best model: 0.7%, average: 9.2%.

- AboutChromebooks. “AI Hallucination Rates Across Different Models 2026.” (February 2026). Global losses: $67.4B in 2024; mathematical proof of structural inevitability.

- Suprmind. “AI Hallucination Statistics: Research Report 2026.” (March 2026). MIT finding: 34% more confident language during hallucination.

- Columbia Journalism Review. “AI Hallucination Source Attribution Test.” (March 2025). Grok-3: 94% hallucination rate; paid models worse than free.

- Stanford University. “Legal Hallucination Study.” LLMs fabricated 120+ non-existent court cases with realistic details.

- GitHub. “Weekly Usage Limits Making Claude Subscriptions Unusable.” Issue #11810, anthropics/claude-code (November 2025).

- GitHub. “Instantly hitting usage limits with Max subscription.” Issue #16157, anthropics/claude-code (January 2026).

- GitHub. “Claude Max plan: Rate limit reached with 38% weekly usage remaining.” Issue #28450, anthropics/claude-code (February 2026).

- PiunikaWeb. “Claude Max subscribers left frustrated after usage limits drained rapidly.” (March 24, 2026).

- The Register. “Claude devs complain about surprise usage limits.” (January 5, 2026).

- Medium / All About Claude. “Claude Weekly Limits Are Still Broken.” (March 2026).

- Hostbor. “Google Today Introduced Hard Limits to Gemini.” (June 2025).

- OpenCraftAI. “Anthropic’s Usage Limits: Why Power Users Are Walking Away.” (December 2025).

- SQ Magazine. “Apple Customer Loyalty Statistics 2026.” NPS: 61, Loyalty: 89%.

- Comparably. “Apple NPS & Customer Reviews.” CSAT: 85%.

- CustomerGauge. “7 Apple NPS Score Benchmarks in 2025.” Average NPS: 61.

- XtendedView. “Apple Customer Loyalty Statistics 2025.” Ecosystem retention: 79%, Millennial repurchase: 96.4%.

- SurveySensum. “8 Lessons From Apple NPS.” 24-hour detractor callback policy; quarterly eNPS.

- Zonka Feedback. “NPS Scores by Company: 2026 Benchmarks.” Nutanix NPS: 92; only 3% of companies score above 70.

- CustomerGauge. “38 SaaS NPS Benchmarks.” Slack: referral as core metric; Salesforce: biannual employee surveys with published results.

- Stanford Institute for Human-Centered AI (HAI). “Mission Statement.” hai.stanford.edu (2026).

- Carnegie Mellon University HCII. “Human-Centered AI Research.” hcii.cmu.edu (2026).

- HumanX 2026 Conference. “Human-Centered Design & Ethical AI.” San Francisco, April 6–9, 2026.

- Anthropic Help Center. “Claude March 2026 usage promotion.” Double usage off-peak, March 13–28, 2026.